Citizens, businesses, societal organisations and other government bodies in a democratic society governed by the rule of law must be able to rely on government organisations using algorithms in a manner that society considers acceptable. That’s why more and more government bodies are looking at how they use algorithms. The big question, however, is how to use them responsibly.

Last year, Berenschot’s lawyers, econometricians and management consultants advised various government bodies on using algorithms responsibly. This work yielded four important lessons, which we’re pleased to share with you.

Lesson 1: The rise of the algorithmic administrative state and the network society means citizens’ legal position demands attention

In our law and public administration programme, we and Jurgen Goossens (Professor of Constitutional Law at Utrecht University) examined how government bodies can respond to the challenges posed by the rise of the algorithmic administrative state and the network society while continuing to reflect the values of a democratic society governed by the rule of law. Algorithms are being used ever more widely, while the legislature is increasingly stepping back and leaving it to government to determine the applicable rules. Government is also increasingly tackling complex societal issues through public-private networks. The challenge, then, is how to keep protecting citizens’ legal position. How can Parliament ensure it is still able to fulfil its democratic and supervisory responsibilities? And how should government organisations themselves keep a grip on their use of algorithms? These are crucial questions for the coming years.

Lesson 2: Procedural safeguards are vital for ensuring due care in court judgments on algorithmic decision-making

The rise of the algorithmic administrative state makes effective judicial review increasingly important. Research by Berenschot’s Wouter Verbeek into the procedural safeguards that help courts reach properly considered judgments on government bodies’ algorithmic decision-making found that such safeguards exist for transparency but are lacking with regard to explainability and the duty of care. This can create an imbalance, leaving citizens and interested parties significantly less able to argue their case than the public body basing its decision on an algorithm. This is despite the fact that other domains (such as criminal law) and other countries (including France) have effective methods for ensuring a fair hearing, many of which could also be applied to algorithmic decision-making in public administration. Wouter recommends:

- Announcing when decision-making will use algorithms;

- Requiring government bodies to have a public and independent expert report compiled before using algorithms in decision-making;

- Introducing a proportionality check to weigh up an algorithm’s explainability against the interests served by using the algorithm and its impact on the person(s) concerned;

- Providing further training for the judiciary.

Lesson 3: Barriers to and recommendations for using algorithms ethically

Research by Berenschot’s Imre Teunissen found that government bodies encounter barriers relating to societal, political and legal legitimacy, as well as to organisational capacity. Sometimes, for example, politicians provide too much guidance, and sometimes too little, in which case considerations of a political nature end up being dealt with by policymakers. An additional problem is the often inadequate communication and coordination between data scientists and policymakers. Using algorithms ethically also means being alert to psychological group dynamics, because groupthink and the division of responsibilities influence the extent to which individuals within an organisation are willing to express doubts or concerns about algorithms. Imre makes four recommendations for policy and politics:

- Provide political guidance at a regional and national level so that complex political decisions are taken by democratically elected politicians;

- Measure the impact of using algorithms to see whether they actually help resolve societal problems;

- Ensure transparent and accessible governance of ethical considerations, including the signalling of concerns and coordination between IT and policy;

- Build adaptability into the processes for using algorithms, because levels of support and ethical standards can change over time.

Lesson 4: Key moments in algorithm governance

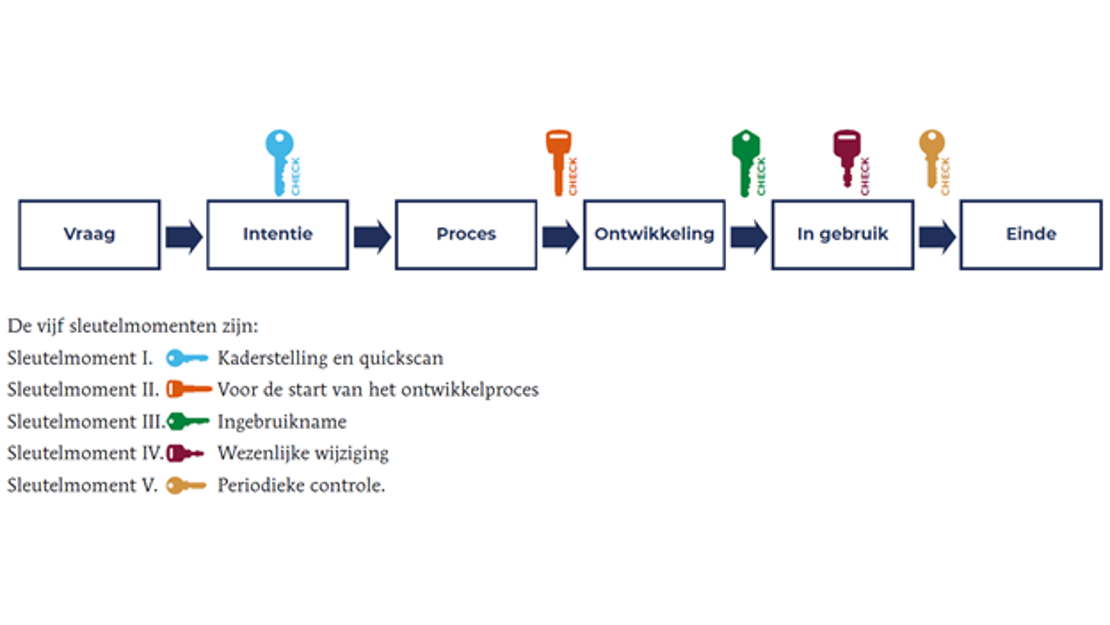

The set of governance guidelines we prepared for a consortium of government organisations includes five checks for ensuring properly structured governance throughout an algorithm’s lifecycle, which runs through the phases issue, intention, process, development, in use and end.

The five key moments are:

Key moment 1: Defining the framework and performing a quick scan

Key moment 2: Before the development process starts

Key moment 3: First use of the algorithm

Key moment 4: Substantial change

Key moment 5: Periodic monitoring

At each key moment, there are two critical issues:

- The democratic, legal and societal legitimacy of how the algorithm is used.

- A values-based management plan: to what extent does this plan (comprising a risk analysis, a legal check and management measures) align, or still align, with the way the algorithm is being used?

By discussing these key moments, we can determine what to focus on in order to keep a government body’s management plan up to date and to ensure that democratic, legal and societal legitimacy are taken into account throughout an algorithm’s lifecycle.

Looking for an expert sparring partner?

Are you looking for ways to ensure your organisation uses algorithms responsibly? And would you like to exchange ideas with an expert sparring partner? If so, please contact us.